Transparency & Methodology: To provide our research and M&A technical evaluations, we partner with marketplaces. If you click a link and make a purchase, we may earn a commission. We only recommend products, services, or assets that meet our technical standards.

Modern cybersecurity is characterized by a level of volatility and volume that strains even complex security mechanisms. As adversaries refine their tactics and procedures, organizations must pivot toward automated cybersecurity intelligence. Large language models (LLMs) can process unstructured data, but their architecture carries inherent latency and hallucination risks. In high-stakes Cyber Threat Intelligence (CTI) environments, where outdated intelligence equals vulnerability, those risks are unacceptable.

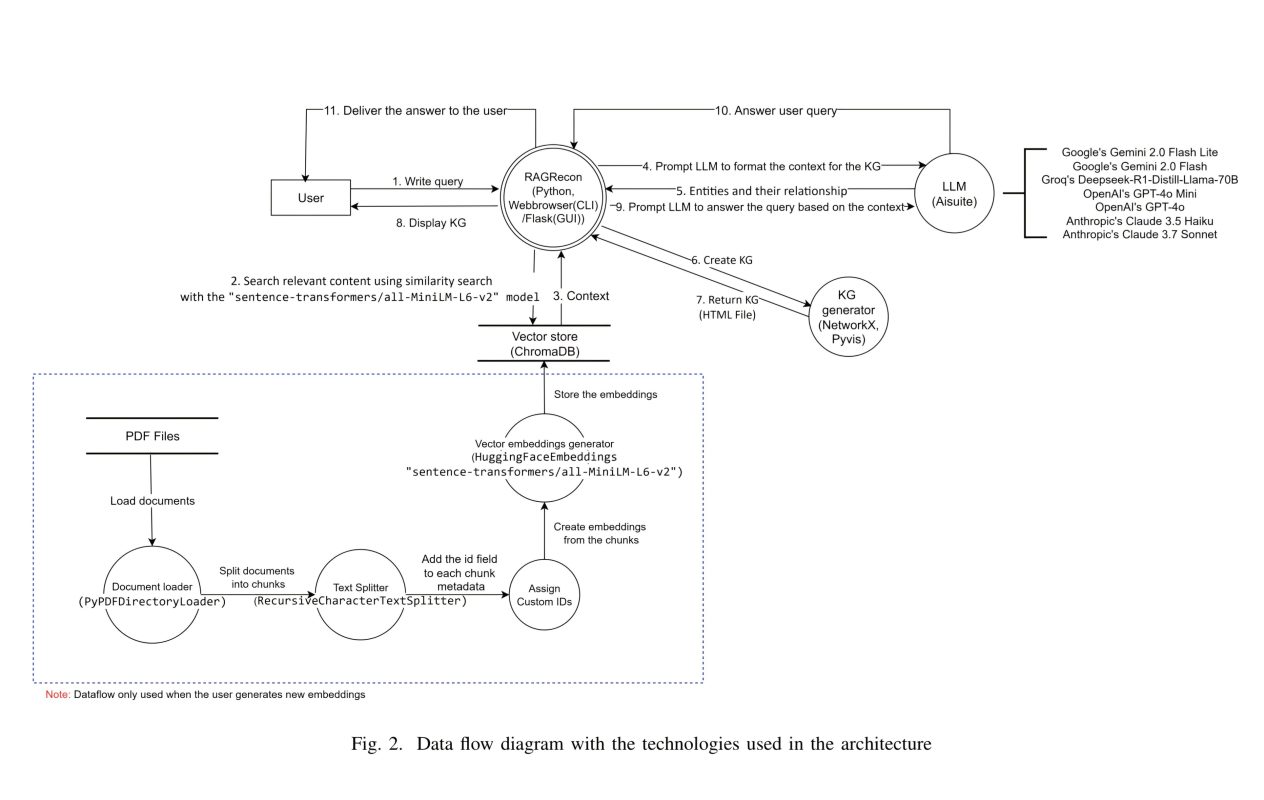

RAGRecon’s architecture is designed to mitigate these risks by integrating LLMs with Retrieval-Augmented Generation (RAG), which provides a robust tool for cybersecurity operations. By shifting from purely parametric memory (static knowledge) to non-parametric memory (real-time CTI ingestion), RAGRecon supports the protection of critical infrastructure against advanced persistent threats and sophisticated blockchain exploits, such as smart contract vulnerabilities. The RAG framework grounds security decisions in the current ground truth rather than in the model’s training-cutoff knowledge. To achieve reliability, the system employs a multi-stage pipeline designed for semantic precision.


Technical Architecture of Data Ingestion and Semantic Vectorization

The utility of a cyber intelligence system is predicated on the quality of its ingestion pipeline. In CTI, the transition from raw PDF reports to queryable data must be handled with architectural rigor, so that the semantic context, the why and how of a threat, is preserved during retrieval.

The RAGRecon processing pipeline executes the following workflow:

- Document Loading: Utilizing PyPDFDirectoryLoader, the system ingests PDF assets, treating each page as a discrete object to maintain metadata (source filename and page numbers).

- Text Chunking: Managed via RecursiveCharacterTextSplitter. Documents are fragmented into 1000-character segments with a 100-character overlap. Crucially, the system utilizes a hierarchical list of separators (["\n\n", "\n", "(?<=\. )", " ", ""]) to ensure splits occur along logical semantic boundaries such as paragraphs and sentences, rather than mid-concept.

- Custom Metadata/ID Generation: Each segment is assigned a unique identifier following the schema filename_p<page>_c<chunk>. This enables precise context reconstruction, allowing analysts to trace intelligence back to the exact paragraph of the source report.
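The chunking and ID scheme above can be illustrated with a minimal, standard-library-only sketch. It is not the RAGRecon implementation: a fixed sliding window stands in for RecursiveCharacterTextSplitter, so it ignores the separator hierarchy and may cut mid-sentence. The names Chunk, sliding_window, and chunk_pages are hypothetical, and keeping the file extension in the ID and using zero-based chunk indexes are assumptions, since the article does not specify either.

```python
from dataclasses import dataclass

CHUNK_SIZE = 1000    # characters per chunk, as stated in the article
CHUNK_OVERLAP = 100  # characters shared by neighbouring chunks


@dataclass
class Chunk:
    chunk_id: str  # filename_p<page>_c<chunk>
    source: str
    page: int
    text: str


def sliding_window(text, size=CHUNK_SIZE, overlap=CHUNK_OVERLAP):
    """Simplified stand-in for a recursive splitter: fixed windows with overlap."""
    if size <= overlap:
        raise ValueError("size must exceed overlap")
    step = size - overlap
    pieces = []
    start = 0
    while start < len(text):
        pieces.append(text[start:start + size])
        if start + size >= len(text):
            break  # the last window already reached the end of the page
        start += step
    return pieces


def chunk_pages(pages):
    """pages: iterable of (filename, page_number, page_text) tuples."""
    chunks = []
    for filename, page, text in pages:
        for index, piece in enumerate(sliding_window(text)):
            # Assumption: zero-based chunk index, file extension kept.
            chunk_id = f"{filename}_p{page}_c{index}"
            chunks.append(Chunk(chunk_id, filename, page, piece))
    return chunks
```

Because the page number and chunk index are both encoded in the ID, a retrieved chunk can be traced back to its position in the source report without a separate lookup table.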

For vectorization, the system employs the all-MiniLM-L6-v2 model to generate high-dimensional numerical embeddings. These representations are stored in ChromaDB, facilitating semantic similarity searches rather than brittle keyword matching. This architecture addresses information overload by allowing the system to identify relevant context even when a query’s terminology differs from the source text, for instance when a report describes an attacker as pivoting through the subnet and the query uses different wording.
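To make the idea of semantic similarity search concrete, here is a brute-force sketch in plain Python. It is only an illustration under stated assumptions: the three-dimensional vectors are toy values (all-MiniLM-L6-v2 produces 384-dimensional embeddings), the in-memory dictionary stands in for a ChromaDB collection, and cosine similarity is one common choice of metric; the article does not state which distance metric the collection is configured with. The function names cosine and top_k are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as matching nothing
    return dot / (norm_a * norm_b)


def top_k(query_vec, store, k=6):
    """Return the ids of the k stored vectors most similar to the query.

    store maps chunk id -> embedding; k=6 mirrors the article's top-K setting.
    """
    scored = [(cosine(query_vec, vec), chunk_id) for chunk_id, vec in store.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk_id for _, chunk_id in scored[:k]]


# Toy store: ids follow the filename_p<page>_c<chunk> schema.
store = {
    "a.pdf_p1_c0": [0.9, 0.1, 0.0],
    "a.pdf_p1_c1": [0.0, 1.0, 0.0],
    "b.pdf_p2_c0": [0.5, 0.5, 0.0],
}
print(top_k([1.0, 0.0, 0.0], store, k=2))
```

A vector database replaces the linear scan with an approximate nearest-neighbour index, but the ranking principle is the same: closeness in embedding space rather than shared keywords.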

Interactive RAG and the Explainability Layer

The black-box nature of standard LLMs is a primary barrier to SOC adoption. In cybersecurity, transparency is a non-negotiable requirement for decision-making. RAGRecon addresses this through an Explainable AI (XAI) framework that follows a RAG-Sequence approach: the system retrieves a curated set of document chunks once and uses that same set to generate a coherent response.

The system utilizes a dual-output architecture to generate two distinct types of intelligence from the top-K=6 retrieved context chunks:

- Textual Responses: Natural-language syntheses grounded strictly in the retrieved data.

- Knowledge Graphs: Interactive visual maps of entities (nodes) and their relationships (edges), extracted via structured JSON (Subject-Relationship-Object).

This visual intelligence layer addresses the wall-of-text problem in dense security documentation. For example, when queried about Broken Object Level Authorization (BOLA), the Knowledge Graph (KG) visually links BOLA to API identifiers and unauthorized access. By observing the links, an analyst can see that the vulnerability arises from an API flaw without scanning dozens of pages. The factual map fosters trust by allowing analysts to verify the rationale behind the AI’s output. That trust enables more precise follow-up questioning, reducing the cognitive load required to model complex threats.
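As a rough illustration of how Subject-Relationship-Object output could be turned into graph data, the sketch below parses a JSON array of triples and collects nodes and edges. The key names subject, relationship, and object, the relationship labels in the example, and the function names are all assumptions made for illustration; the article does not give the exact JSON schema RAGRecon uses.

```python
import json


def parse_triples(raw):
    """Parse LLM output into (subject, relationship, object) tuples.

    Raises ValueError if the payload is not a JSON array of objects
    carrying the three expected keys (hypothetical key names).
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of triples")
    triples = []
    for item in data:
        if not isinstance(item, dict):
            raise ValueError("each triple must be a JSON object")
        try:
            triple = (item["subject"], item["relationship"], item["object"])
        except KeyError as exc:
            raise ValueError(f"triple is missing key {exc}") from exc
        triples.append(triple)
    return triples


def build_graph(triples):
    """Return (sorted node list, edge list) for a set of triples."""
    nodes = set()
    edges = []
    for subject, relationship, obj in triples:
        nodes.update((subject, obj))
        edges.append((subject, relationship, obj))
    return sorted(nodes), edges


# Illustrative payload based on the BOLA example; labels are made up.
raw = json.dumps([
    {"subject": "BOLA", "relationship": "involves", "object": "API identifiers"},
    {"subject": "BOLA", "relationship": "leads to", "object": "unauthorized access"},
])
nodes, edges = build_graph(parse_triples(raw))
print(nodes)
print(edges)
```

Validating the payload before rendering matters in practice, because a model that emits malformed JSON would otherwise fail silently or produce a broken graph.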

Performance Benchmarks: Reliability and Factual Accuracy Metrics

High-value cybersecurity intelligence requires a strict balance between comprehensive retrieval and factual precision. RAGRecon’s reliability was evaluated using two primary indicators, each computed with a four-step calculation, to quantify hallucination and retrieval noise.

Faithfulness Measures the Ratio of Statements Supported by Context

Calculation:

- LLM breaks responses into statements.

- LLM verifies statements against context.

- Tally Yes/No verdicts.

- Calculate the ratio.
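The four steps above reduce to a simple ratio once the verdicts exist. The sketch below shows that arithmetic only. In the evaluated system an LLM both splits the response into statements and judges each one; here the statements are passed in already split, and a naive substring check (substring_judge, a hypothetical stand-in) replaces the LLM judge so the example can run on its own.

```python
from typing import Callable, List


def faithfulness(
    statements: List[str],
    context: str,
    is_supported: Callable[[str, str], bool],
) -> float:
    """Share of statements that the judge finds supported by the context."""
    if not statements:
        raise ValueError("no statements to score")
    verdicts = [is_supported(statement, context) for statement in statements]
    return sum(verdicts) / len(verdicts)  # Yes verdicts / all verdicts


def substring_judge(statement: str, context: str) -> bool:
    """Toy stand-in for the LLM verifier: exact, case-insensitive match."""
    return statement.lower() in context.lower()


context = (
    "BOLA arises from an API flaw. "
    "Attackers manipulate object identifiers to reach other users' data."
)
statements = [
    "BOLA arises from an API flaw",
    "Attackers manipulate object identifiers",
    "BOLA only affects mobile apps",  # not in the context
]
print(round(faithfulness(statements, context, substring_judge), 2))  # 0.67
```

Two of the three toy statements are supported, so the score is 2/3; a response whose every statement is backed by the retrieved chunks would score 1.0.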

Context Relevance Measures the Ratio of Useful Sentences Within Retrieved Context

Calculation:

- LLM extracts relevant sentences.

- Count relevant sentences.

- Count total sentences in context.

- Calculate the ratio.
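Context relevance follows the same pattern, with sentences of the retrieved context as the unit of counting. The sketch below is an illustration under assumptions: a regular expression splits sentences, and a keyword-overlap check (keyword_judge, with a small hypothetical stopword list) stands in for the LLM that extracts relevant sentences in the evaluated system.

```python
import re

# Hypothetical stopword list, just large enough for the example question.
STOPWORDS = {"what", "is", "the", "a", "an", "of", "how", "does"}


def split_sentences(text):
    """Split on whitespace that follows ., ! or ? (a rough heuristic)."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def keyword_judge(question, sentence):
    """Toy stand-in for the LLM: relevant if it shares a non-stopword token."""
    question_terms = set(re.findall(r"[a-z0-9]+", question.lower())) - STOPWORDS
    sentence_terms = set(re.findall(r"[a-z0-9]+", sentence.lower()))
    return bool(question_terms & sentence_terms)


def context_relevance(question, context, is_relevant):
    """Relevant sentences divided by all sentences in the retrieved context."""
    sentences = split_sentences(context)
    if not sentences:
        raise ValueError("context contains no sentences")
    relevant = [s for s in sentences if is_relevant(question, s)]
    return len(relevant) / len(sentences)


context = (
    "The report covers many topics. "
    "BOLA is an API authorization flaw. "
    "Pricing is listed in appendix B. "
    "Contact the vendor for support."
)
print(context_relevance("What is BOLA?", context, keyword_judge))  # 0.25
```

Only one of the four toy sentences mentions the query term, giving 0.25. A low ratio means most retrieved sentences were not needed to answer the question.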

Experimental Results Analysis

The following findings are based on a dataset of 2,050 automated decisions that were manually verified to check the reliability of the self-evaluation:

| Metric | Observed Performance | Strategic Impact |
| --- | --- | --- |
| Faithfulness Score | Consistent > 0.8 / 1.0 | High reliability; exceptionally low hallucination rate. |
| Context Relevance | ~8% Average Utilization | High efficiency; successfully filters noise from large reports. |
| Manual Verification | 90% – 97% Accuracy | Validates the reliability of the self-evaluation methodology. |

A faithfulness score exceeding 0.8 is critical for the cybersecurity of digital infrastructure. In a live SOC environment, a single hallucinated threat could lead to catastrophic resource misallocation. The manual verification of over 2,000 decisions confirms that the system’s internal self-evaluation remains a robust metric for operational deployment.
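One plausible way to read the manual verification figure is as an agreement rate between the automated verdicts and the human labels for the same decisions. The sketch below shows that calculation on ten made-up verdicts; the function name agreement_rate and the data are hypothetical, and the article does not describe exactly how the 90% to 97% figures were derived.

```python
def agreement_rate(automated, manual):
    """Fraction of decisions where the automated verdict matches the human label."""
    if len(automated) != len(manual):
        raise ValueError("verdict lists must have the same length")
    if not automated:
        raise ValueError("no verdicts to compare")
    matches = sum(a == m for a, m in zip(automated, manual))
    return matches / len(automated)


# Made-up verdicts for ten decisions; they differ only at index 4.
automated = [True, True, False, True, True, False, True, True, True, False]
manual = [True, True, False, True, False, False, True, True, True, False]
print(agreement_rate(automated, manual))  # 0.9
```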

Comparative Analysis of LLM Performance

To determine the optimal engine for CTI tasks, seven models were benchmarked across Conventional CTI (24 reports) and Blockchain CTI (28 reports) datasets.

- Model Spectrum: Google (Gemini 2.0 Flash and Flash-Lite), OpenAI (GPT-4o and GPT-4o mini), Anthropic (Claude 3.7 Sonnet and Claude 3.5 Haiku), and Groq (Deepseek-R1-Distill-Llama-70B).

- Consistency Benchmarking: The locally hosted Mistral 7B model was utilized as a benchmark for self-evaluation consistency, demonstrating high reliability across multiple runs.

- Dataset Variance: A slight performance advantage was noted in the Blockchain CTI domain. Two possible reasons are the smaller size of the dataset and the possibility that model training data was weighted toward blockchain documentation.

Architectural Insight: A significant finding involves the 20-billion-parameter threshold. Models below this size frequently exhibited formatting errors when attempting to generate the JSON required for Knowledge Graphs. For organizations seeking to automate visual explainability, deploying models with at least 20B parameters is a hard technical requirement to ensure consistent KG generation.


Conclusion and Future Implications

The performance analysis of RAGRecon indicates that integrating RAG transforms unstructured security data into explainable intelligence. By automating the extraction of tactics, techniques, and procedures (TTPs), the system helps address the volume and pace of modern cybersecurity threats.

The system’s ability to maintain high accuracy across 2,050 verified decisions demonstrates that AI can be a reliable partner in cybersecurity when properly grounded. The implementation of Knowledge Graphs gives human analysts the transparency to navigate threats with speed and precision. RAGRecon represents an important advance in security architecture, providing the interpretability required to defend complex digital systems and critical infrastructure.

Sources

Large Language Models for Explainable Threat Intelligence. (RAGRecon). Large Language Models, 7 Nov 2025. https://arxiv.org/abs/2511.05406

