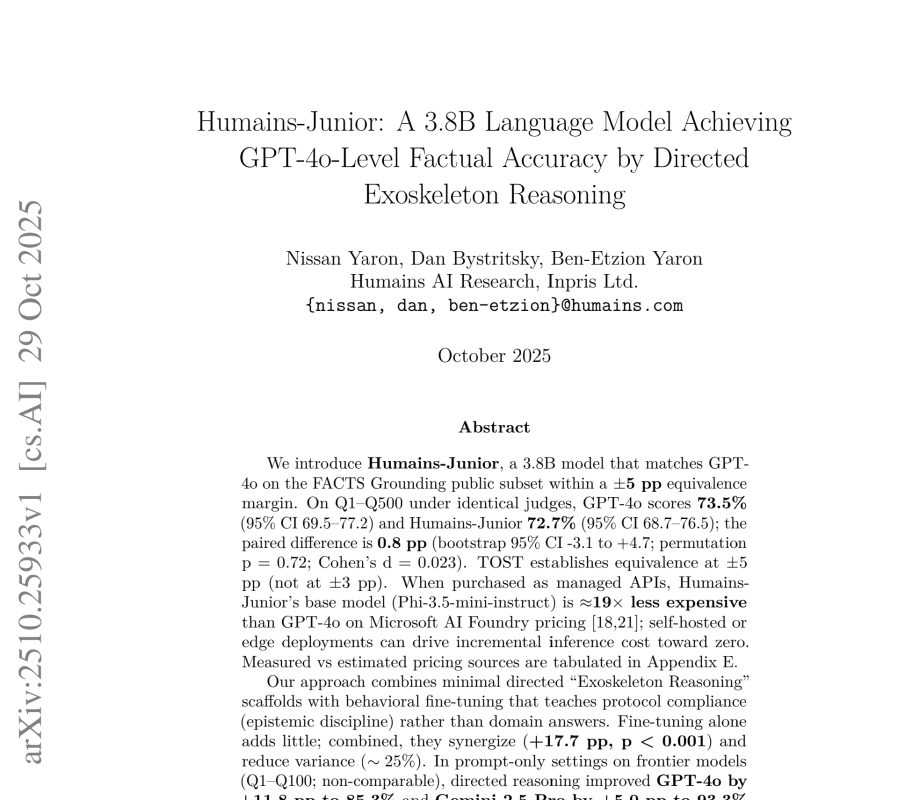

Humains-Junior Language Model Challenges GPT-4o on Factual Accuracy

A new research paper reports that the Humains-Junior language model matches the factual accuracy of GPT-4o on a specific public subset. According to the paper, the model achieves this performance through a method called “Exoskeleton Reasoning.”
