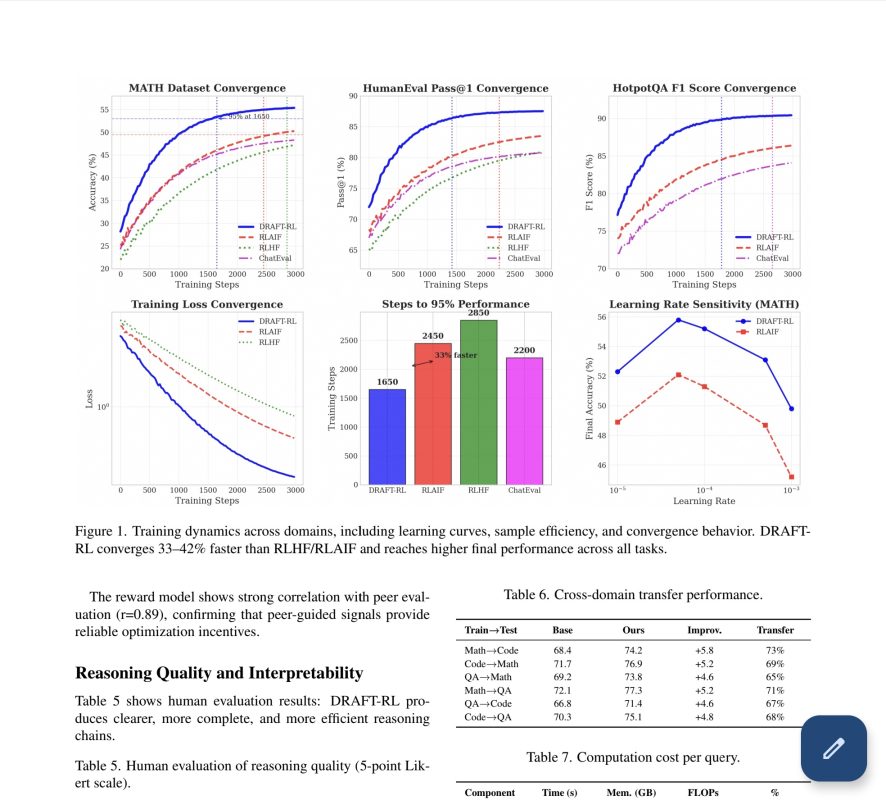

DRAFT-RL: The First LLM Evaluation Framework to Integrate Structured Reasoning with Multi-Agents

DRAFT-RL is an evaluation framework for LLMs designed to address critical limitations in LLM-based reasoning systems by integrating Chain-of-Draft (CoD) reasoning with multi-agent reinforcement learning.