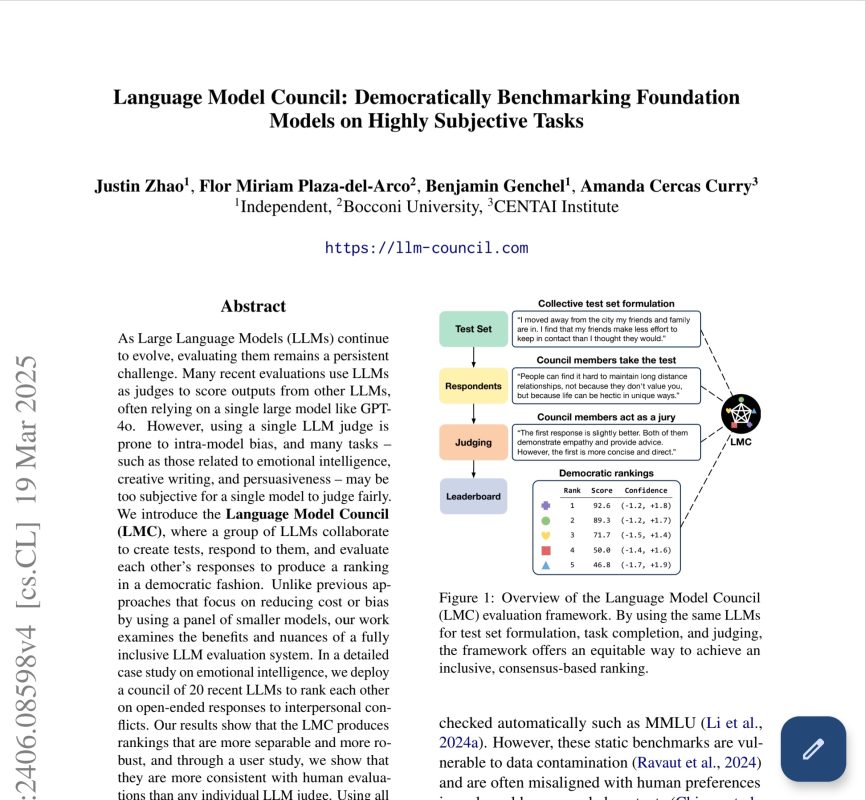

How Did 20 LLMs Dethroned GPT-4o and Reveal the Flaws in AI Leaderboards

The Language Model Council research suggests that the top spot on any given leaderboard might be an artifact of evaluation design rather than a reflection of superior, generalized capability.

How Did 20 LLMs Dethroned GPT-4o and Reveal the Flaws in AI Leaderboards Read Article »