Transparency & Methodology: To provide our research and technical evaluations, we partner with marketplaces. If you click a link and inquire about an acquisition or make a purchase, we may earn a commission. We only recommend assets that meet our technical standards. [ Learn more about our review process.]

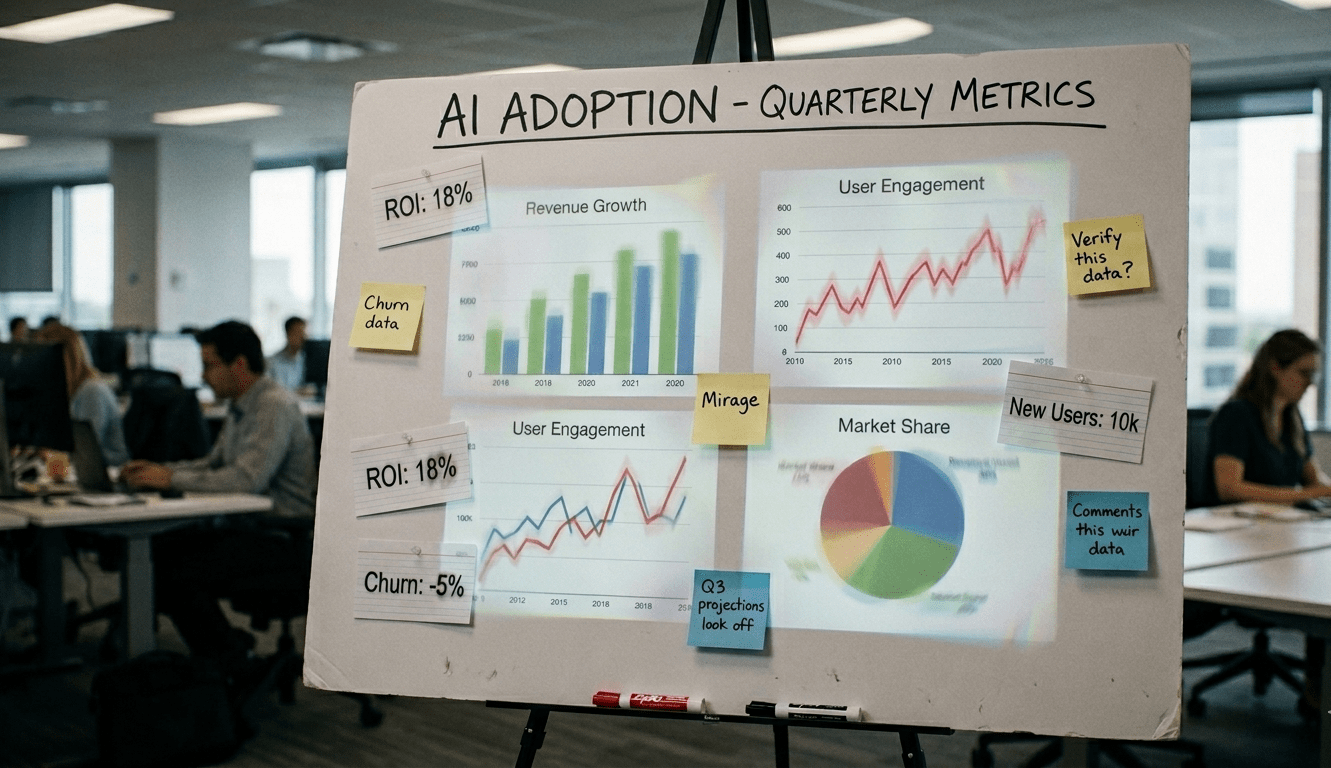

As we continue to move toward AI adoption, we often point to the skyrocketing number of companies integrating AI technologies into their daily workflows. On the surface, the data appear robust. Popular surveys tracking corporate AI adoption rely on samples ranging between 1,363 and 1,843 companies. However, as any data analyst will tell you, a figure for the share of companies using AI technology is only as reliable as the methodology supporting it. While the statistics suggest a massive shift in the corporate landscape, a closer look at how AI adoption is measured reveals a more nuanced, and perhaps different, reality. To understand the true state of AI, we must move past the percentages and interrogate the methodological ambiguity in the data.

The Elastic Definition of AI Adoption

One of the most significant challenges in tracking AI trends is the lack of a technical definition for what it actually means to adopt the technology. In major industry reports, the term is frequently left open to interpretation. Specifically, we asked organizations about their adoption of core technologies and received mixed results. Even within technical categories, the spectrum of activity is vast. Because there is no strict standard, a company might be categorized as an AI user regardless of whether the technology is an experiment or a core operational driver.


This lack of precision creates a significant hurdle for researchers attempting to establish statistical significance. When limited experimentation is weighted the same as widespread AI implementation, it becomes difficult to distinguish between an AI-powered organization that has restructured around deep learning and an AI-curious company that does not yet rely on even a single AI tool.

📈 Direct Intelligence Data

- Total Addressable Market: By 2030, AI agent-powered solutions are estimated to represent 60% of the total software market.

- Application Software Growth: The application software market is projected to reach $780 billion by 2030, representing a 13% compound annual growth rate.

- Multimodal AI: The global multimodal AI market, integrating text, vision, and sensors, is projected to reach $10.89 billion by 2030.

- Sector-Specific Value: Banking and retail are expected to capture the highest value from GenAI. Banking could see an additional $200 to $340 billion in annual impact.
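To put the compound annual growth rate figure in context, here is a minimal sketch of the arithmetic behind a CAGR projection. The $780 billion end value and the 13% rate come from the bullet above. The six-year horizon is an assumption made purely for illustration, since the source does not state a base year, and the function names are hypothetical.

```python
def compound(start_value: float, rate: float, years: int) -> float:
    """Grow start_value at a constant annual rate for the given number of years."""
    return start_value * (1 + rate) ** years


def implied_start(end_value: float, rate: float, years: int) -> float:
    """Invert the CAGR formula to find the starting value a projection implies."""
    return end_value / (1 + rate) ** years


END_VALUE_BN = 780.0  # from the text: projected market size in 2030, in $ billions
CAGR = 0.13           # from the text: 13% compound annual growth rate
YEARS = 6             # ASSUMPTION: the source does not state the base year

start_bn = implied_start(END_VALUE_BN, CAGR, YEARS)
print(f"Implied starting market size: ${start_bn:.0f} billion")
print(f"Check, compounded forward: ${compound(start_bn, CAGR, YEARS):.0f} billion")
```

Changing `YEARS` shifts the implied starting point considerably, which is one reason a projection quoted without its base year is hard to evaluate.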

The One-Function AI Threshold

The bar for being classified as an AI-using company is surprisingly low. Under current survey criteria, an organization is considered an AI adopter if it has implemented the technology in just one of eleven recognized business functions. These functions include:

- Service Operations

- Product and Service

- Risk and Compliance

- Supply Chain Management

- Software

A one-function threshold serves as a proxy for presence, but it often obscures the lack of depth in corporate-wide AI maturity. There is a profound difference between low-barrier functions and high-value operational functions. For instance, a company using a basic AI tool for document review is counted the same as a company that has rebuilt customer management around predictive modeling. This low entry bar likely inflates the perception of global AI integration, in turn highlighting a digital presence rather than a deep, cross-functional transformation.
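The gap between presence and depth can be sketched in a few lines. This is an illustrative model only, not the scoring method of any actual survey: the `Company` class, the `breadth` measure, and both example respondents are hypothetical, and only the five functions named in the list above are enumerated because the source does not list the other six.

```python
from dataclasses import dataclass, field

# The five functions named in the article; the remaining six are not listed there.
NAMED_FUNCTIONS = (
    "Service Operations",
    "Product and Service",
    "Risk and Compliance",
    "Supply Chain Management",
    "Software",
)
TOTAL_FUNCTIONS = 11  # from the text: "one of eleven recognized business functions"


@dataclass
class Company:
    name: str  # hypothetical respondent label
    ai_functions: set = field(default_factory=set)  # functions where AI is in use


def is_adopter(company: Company) -> bool:
    """One-function threshold: AI in any single function counts as adoption."""
    return len(company.ai_functions) >= 1


def breadth(company: Company) -> float:
    """Share of the eleven functions using AI (an assumed proxy for depth)."""
    return len(company.ai_functions) / TOTAL_FUNCTIONS


# Hypothetical respondents, for illustration only.
curious = Company("AI-curious Co", {"Risk and Compliance"})
powered = Company("AI-powered Co", set(NAMED_FUNCTIONS))

for c in (curious, powered):
    print(f"{c.name}: adopter={is_adopter(c)}, breadth={breadth(c):.0%}")
```

Both respondents pass the binary adopter test, yet their breadth scores differ several times over. A headline adoption percentage built on `is_adopter` alone cannot tell them apart.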


Conclusion: A Moving Target for a New Era

Measuring the progress of artificial intelligence is as complex as the technology itself. We are currently navigating a landscape defined by elastic definitions, low functional thresholds, and the statistical complexities of global weighting.

As we move forward, our benchmarks must evolve. We are entering an era of multimodal capabilities that integrate text, images, and audio. We must also begin measuring computational intensity, because AI systems are defined by their training computation. And as systems demand higher computational overhead, our metrics should shift toward measuring cross-functional integration rather than simple presence.
